Last week, our head of Data Engineering, Deep Varma, laid out some of Trulia’s relevancy challenges, among them optimization. Because photos are vital to searching for and discovering homes, this post describes how we’re using image recognition technology to optimize the content we show consumers.

Trulia has one of the largest collections of real estate photos in the U.S., receiving more than a million new photos from new listings every day. To ensure that consumers see the most engaging and relevant content, we need to understand what each photo on Trulia contains.

Addressing the Opportunity

Understanding photo content opens a world of opportunity, but unlike most other property information, photos don’t come with descriptive metadata. Today, one of the most common ways to generate this information is crowdsourcing, in which human labelers annotate photos, but the process can be extremely slow.

Alternatively, you can teach machines to recognize what photos contain. With recent advances in computer vision, driven by Deep Learning and its success on large-scale image recognition tasks like ImageNet, machines are getting better at understanding images.

At Trulia, we want photo attributes to get surfaced as soon as a property becomes available. Given the volume of photos we receive every day, crowdsourcing isn’t a feasible solution. This led us to develop our own image recognition capabilities.

Our algorithms involve a mix of approaches from Machine Learning, Computer Vision and Natural Language Processing, which leverage information from both property descriptions and photos.
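The post doesn’t say how evidence from descriptions and photos is combined. As a minimal sketch, assuming each source independently yields a probability that an attribute is present, a noisy-OR rule is one simple way to merge them. The keyword lists, the `hit_prob` value, and the function names below are hypothetical, not Trulia’s implementation.

```python
from typing import Dict, List

# Hypothetical keyword vocabulary per attribute (illustrative only).
KEYWORDS: Dict[str, List[str]] = {
    "hardwood floors": ["hardwood"],
    "granite countertops": ["granite"],
    "infinity pool": ["infinity pool", "infinity-edge pool"],
}


def text_score(description: str, attribute: str, hit_prob: float = 0.7) -> float:
    """Assumed text evidence: hit_prob if any keyword for the attribute appears, else 0."""
    text = description.lower()
    keywords = KEYWORDS.get(attribute, [])
    return hit_prob if any(k in text for k in keywords) else 0.0


def fuse(image_prob: float, text_prob: float) -> float:
    """Noisy-OR: the attribute is absent only if both sources independently miss it."""
    return 1.0 - (1.0 - image_prob) * (1.0 - text_prob)


if __name__ == "__main__":
    desc = "Remodeled kitchen with granite counters and new hardwood throughout."
    # An uncertain image score (0.4) is boosted by a keyword hit in the description.
    print(fuse(0.4, text_score(desc, "hardwood floors")))
```

With an image score of 0.4 and a keyword hit worth 0.7, the fused probability is 1 − 0.6 × 0.3 ≈ 0.82. A learned fusion model would normally replace the fixed `hit_prob`, but the noisy-OR shows why two weak signals together can be more convincing than either alone.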

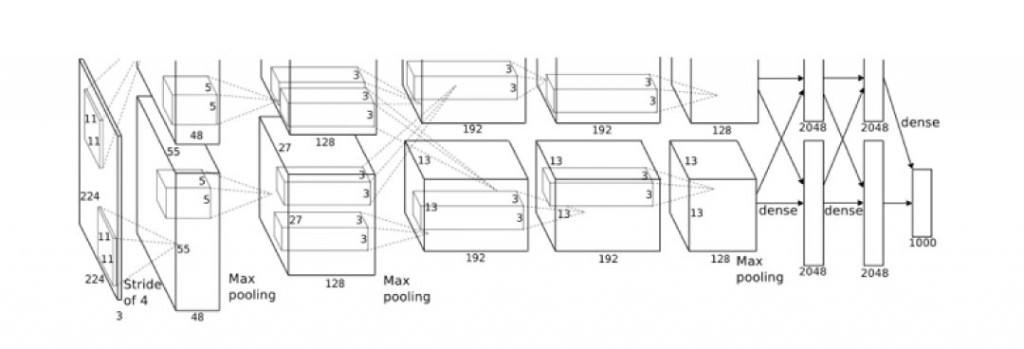

For image recognition, we use a combination of techniques, including traditional Bag of Visual Words (BoVW) approaches, as well as current state-of-the-art approaches using Deep Learning and Convolutional Neural Networks (CNN).
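In a Bag of Visual Words pipeline, local descriptors (for example, SIFT) are extracted from an image, each descriptor is assigned to its nearest “visual word” in a codebook (typically learned with k-means), and the image is represented as a normalized histogram of word counts, which a classifier then consumes. The sketch below covers only the quantization and histogram steps, assuming toy 2-D descriptors and a fixed, hypothetical codebook.

```python
from typing import List, Sequence

Vector = Sequence[float]


def nearest_word(descriptor: Vector, codebook: Sequence[Vector]) -> int:
    """Index of the closest visual word by squared Euclidean distance."""
    return min(
        range(len(codebook)),
        key=lambda i: sum((d - c) ** 2 for d, c in zip(descriptor, codebook[i])),
    )


def bovw_histogram(descriptors: Sequence[Vector], codebook: Sequence[Vector]) -> List[float]:
    """Quantize each local descriptor to a visual word; return an L1-normalized histogram."""
    counts = [0] * len(codebook)
    for desc in descriptors:
        counts[nearest_word(desc, codebook)] += 1
    total = sum(counts)
    return [c / total for c in counts] if total else [0.0] * len(codebook)


if __name__ == "__main__":
    codebook = [(0.0, 0.0), (1.0, 1.0), (5.0, 5.0)]  # hypothetical 3-word vocabulary
    descriptors = [(0.1, 0.2), (0.9, 1.1), (4.8, 5.2), (5.1, 4.9)]
    print(bovw_histogram(descriptors, codebook))  # [0.25, 0.25, 0.5]
```

The histogram discards where each feature occurred, which is what makes BoVW robust to viewpoint changes but also what limits it compared with CNNs.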

The CNN architecture by Krizhevsky et al. that won the ImageNet challenge (ILSVRC) in 2012

While traditional machine learning requires handcrafted features, Deep Learning models discover multiple levels of features directly from the data, enabling end-to-end learning.

One of the most popular Deep Learning architectures is the CNN, which achieves state-of-the-art performance on several computer vision tasks, including object recognition and scene classification. A CNN comprises multiple layers of convolution, pooling, and rectification, and the parameters of every layer are learned end to end from training data to optimize performance on a given task. We use CNNs to drive our scene classification, image appropriateness, and image quality measures.
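To make the three layer types concrete, here is a minimal forward pass through one convolution, rectification (ReLU), and max-pooling stage in plain Python. In a real CNN the kernels are learned by backpropagation and there are many channels and layers; the hand-picked vertical-edge kernel here is an assumption chosen purely for illustration.

```python
from typing import List

Matrix = List[List[float]]


def conv2d(image: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' 2-D cross-correlation with stride 1 (what CNN libraries call convolution)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
         for j in range(out_w)]
        for i in range(out_h)
    ]


def relu(x: Matrix) -> Matrix:
    """Rectification: replace negative activations with zero."""
    return [[max(0.0, v) for v in row] for row in x]


def max_pool(x: Matrix, size: int = 2) -> Matrix:
    """Non-overlapping max pooling; rows/columns that don't fill a full window are dropped."""
    return [
        [max(x[i + a][j + b] for a in range(size) for b in range(size))
         for j in range(0, len(x[0]) - size + 1, size)]
        for i in range(0, len(x) - size + 1, size)
    ]


if __name__ == "__main__":
    # A 5x5 image with a dark-to-bright vertical edge between columns 1 and 2.
    image = [[0, 0, 1, 1, 1] for _ in range(5)]
    edge_kernel = [[-1, 1], [-1, 1]]  # hypothetical hand-set kernel; a CNN would learn it
    print(max_pool(relu(conv2d(image, edge_kernel))))  # [[2, 0.0], [2, 0.0]]
```

The pooled map keeps a strong response only in the region containing the edge. Stacking many such stages lets deeper layers respond to progressively larger structures, such as countertops or staircases, rather than to individual edges.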

The Benefits of Understanding Photo Content

Our image recognition pipeline automatically annotates more than a million new photos every day with a rich set of attributes, such as room types (e.g., cellar, kitchen, living room) and room characteristics (e.g., granite countertops, hardwood floors), and serves this information to various downstream applications. We can also measure image quality, attractiveness, and appropriateness.
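Room types and room characteristics behave differently as labels: a photo usually shows one room type, but it can show any number of characteristics. One common way to reflect that, sketched here under the assumption that a model emits raw scores (logits) for each label, is a softmax over room types and an independent sigmoid with a threshold per characteristic. The label names, scores, and the 0.5 threshold are hypothetical, and `annotate` is an illustrative function, not Trulia’s API.

```python
import math
from typing import Dict, List, Union


def softmax(logits: Dict[str, float]) -> Dict[str, float]:
    """Probability distribution over mutually exclusive labels (e.g., room types)."""
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {k: math.exp(v - m) for k, v in logits.items()}
    z = sum(exps.values())
    return {k: e / z for k, e in exps.items()}


def sigmoid(x: float) -> float:
    """Numerically stable logistic function for independent yes/no labels."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def annotate(room_logits: Dict[str, float], feature_logits: Dict[str, float],
             threshold: float = 0.5) -> Dict[str, Union[str, float, List[str]]]:
    """Pick one room type; keep every characteristic whose probability clears the threshold."""
    room_probs = softmax(room_logits)
    room = max(room_probs, key=room_probs.get)
    features = sorted(k for k, v in feature_logits.items() if sigmoid(v) >= threshold)
    return {"room_type": room, "room_confidence": room_probs[room], "characteristics": features}


if __name__ == "__main__":
    print(annotate(
        {"kitchen": 3.0, "living room": 1.0, "cellar": -2.0},
        {"granite countertops": 2.2, "hardwood floors": 0.4, "infinity pool": -3.0},
    ))
```

Here the photo is labeled a kitchen with both granite countertops and hardwood floors, while the infinity pool is rejected. Raising the threshold trades recall for precision, which matters when attributes feed user-facing modules.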

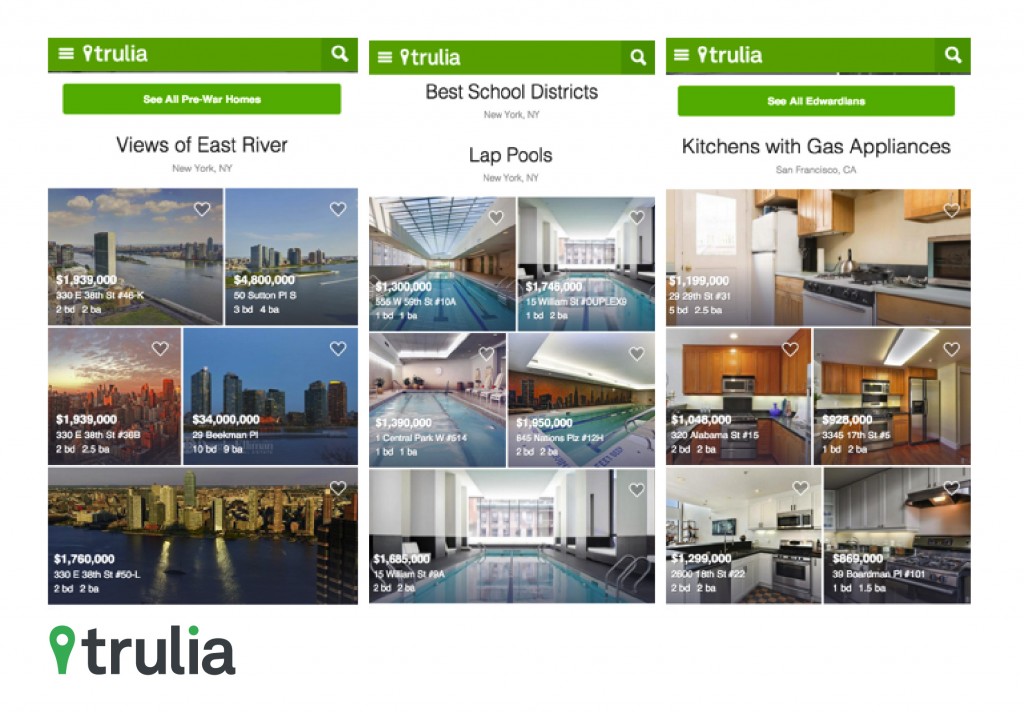

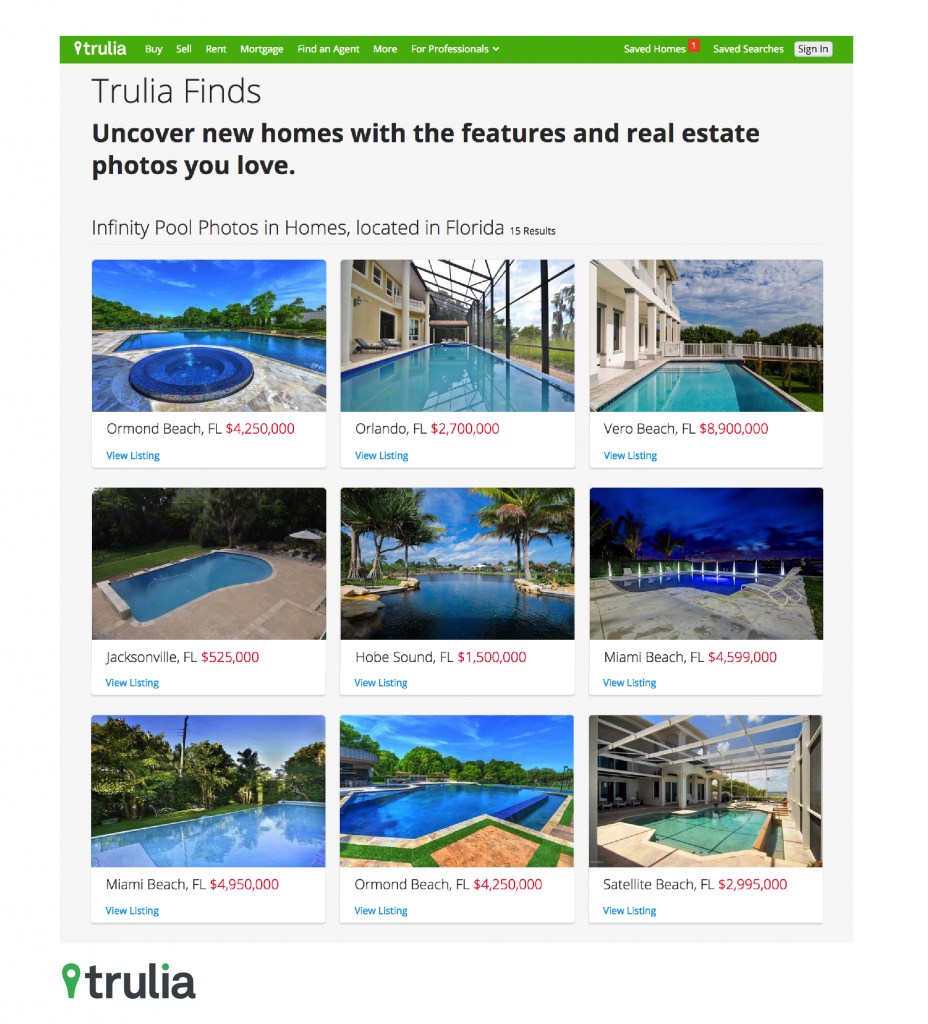

Our image recognition capabilities already drive products like “Discovery,” where people are shown customized modules around home features like “Infinity Pools.” In these applications, we’re seeing increased user engagement.

We’re also working to better organize how we display property photos, which helps us generate customized photo collections like “Homes with Cellars in Texas” or “Homes with Infinity Pools in Florida.” This, in turn, gives consumers an easier and more enjoyable search experience.
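Once each listing carries the attributes detected in its photos, a collection such as “Homes with Cellars in Texas” is essentially a group-by on (attribute, location). The sketch below assumes a hypothetical `Listing` record that stores a state code and the union of attributes found across its photos; the `min_size` cutoff is likewise an illustrative assumption for suppressing collections too small to be useful.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class Listing:
    listing_id: str
    state: str
    # Union of attributes detected across all of the listing's photos (hypothetical field).
    attributes: Set[str] = field(default_factory=set)


def build_collections(listings: List[Listing],
                      min_size: int = 1) -> Dict[Tuple[str, str], List[str]]:
    """Group listing IDs by (attribute, state), e.g. ("cellar", "TX") -> Homes with Cellars in Texas."""
    groups: Dict[Tuple[str, str], List[str]] = defaultdict(list)
    for listing in listings:
        for attr in listing.attributes:
            groups[(attr, listing.state)].append(listing.listing_id)
    # Drop collections with too few homes to be worth showing.
    return {key: ids for key, ids in groups.items() if len(ids) >= min_size}


if __name__ == "__main__":
    listings = [
        Listing("a1", "TX", {"cellar"}),
        Listing("a2", "TX", {"cellar", "hardwood floors"}),
        Listing("b1", "FL", {"infinity pool"}),
    ]
    print(build_collections(listings, min_size=2))  # {('cellar', 'TX'): ['a1', 'a2']}
```

Because the grouping runs on attributes that are already extracted, new collections appear as soon as freshly listed homes are annotated, without anyone tagging photos by hand.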

These are just a few use cases where understanding photo properties can help drive a better product experience. Several applications are in the works to extract even more information from our photos to further enhance Trulia’s user experience.